- New Research Questions Haynesville Shale Economics

- The Challenges And Costs Of Wind Energy

- New Transport Index A Leading Indicator Of Economy

- Cool Pacific Ocean Says Greater 2010 Hurricane Season

- Commodity Investment Class Earns Positive Review

Musings From the Oil Patch

February 16, 2010

Allen Brooks

Managing Director

Note: Musings from the Oil Patch reflects an eclectic collection of stories and analyses dealing with issues and developments within the energy industry that I feel have potentially significant implications for executives operating oilfield service companies. The newsletter currently anticipates a semi-monthly publishing schedule, but periodically the event and news flow may dictate a more frequent schedule. As always, I welcome your comments and observations. Allen Brooks

New Research Questions Haynesville Shale Economics

Conventional wisdom says the United States is blessed with 100 years of natural gas supplies due to the success in applying horizontal drilling and hydraulic fracturing technologies to gas shale formations that underlie many of the oil and gas producing formations throughout the country. The drilling and completion success of the nation’s oldest and largest gas shale basin, the Barnett Shale in north central Texas, sparked exploration efforts throughout the country. The industry has found numerous new gas shale basins to exploit. The prospect of this new, huge gas endowment has sparked efforts to find additional markets for natural gas including as a transportation fuel for cars and over-the-road trucks. Gas shale enthusiasts are even suggesting that the U.S. may become an exporter of liquefied natural gas (LNG) to capitalize on this gas bonanza.

Key to the gas shale revolution is the huge initial production (IP) from wells in certain of the newer plays: the Haynesville, Fayetteville, Eagle Ford and Marcellus Shales. The high IP rates of these horizontal wells are believed to lead to the recovery of significantly larger volumes of natural gas than can be extracted from vertical wells. The high IPs and greater reserve recoveries contribute to low industry finding and development costs that make the fields highly profitable even at relatively low natural gas prices. High well reserve potential at low per-unit costs has spawned a gas shale leasing boom, which, due to short lease lives, is stimulating a drilling boom. The downside of this industry focus on shales has been sustained natural gas production that has limited any rise in gas prices, since demand has yet to recover.

Last week two different analyses of the Haynesville Shale concluded that the economics of this basin have peaked and it will not become the bonanza producers and investors have forecasted. One of the analyses came from industry consultant Art Berman of Labyrinth Consulting Services, Inc. (http://petroleumtruthreport.blogspot.com/) while the other came from Wall Street E&P analyst Ben Dell of Bernstein Research. Mr. Berman’s research report will soon be available on his blog, but we were provided with an early version. So what’s behind the conclusions of these two analysts?

Both Mr. Berman and Mr. Dell have studied the results of roughly 135 (133 and 136, respectively) horizontal wells drilled and producing in the Haynesville Shale. The recent data show that the average IP of Haynesville Shale wells is starting to fall and that increasing the number of fracture treatments in each well is not helping to improve their output. While the basin’s production has grown dramatically since 2008, it now appears to be holding steady, which obscures the fact that the leading E&P companies are experiencing declining IPs. In other words, the basin’s recent production gains are largely due to better performance from newer entrant E&P companies and their use of larger numbers of fracture treatments in each well they drill.

Exhibit 1. Production Declines Are Stabilizing Within Year

Source: A. Berman presentation

Mr. Berman plotted the daily production from the Haynesville Shale wells owned by Petrohawk (HK-NYSE), which appear to be primarily in the core (most prolific) area of the basin. As the chart shows, the IP starts high but then declines rapidly. The average daily production line he calculates, which admittedly may be influenced by the natural distortion arising from mathematical averaging, shows a rapid decline before stabilizing within one year of life. If accurate, these wells are reaching stable production much faster than wells in the Barnett, the oldest producing gas shale basin. That may mean lower volumes of gas recovered from these wells.
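Neither analyst's curve-fitting method is described here, so the sketch below swaps in a standard industry tool, the Arps hyperbolic decline model, simply to show how a "high IP, rapid decline, then flattening" profile translates into cumulative recovery. The initial rate, decline rate and b-exponent are hypothetical and are not fitted to Petrohawk's wells.

```python
import math


def arps_rate(qi, di, b, t):
    """Arps decline rate at time t (years).

    qi: initial rate in MMcf/d; di: initial nominal decline per year;
    b: hyperbolic exponent (b = 0 gives exponential decline).
    """
    if b == 0:
        return qi * math.exp(-di * t)
    return qi / (1.0 + b * di * t) ** (1.0 / b)


def cumulative_bcf(qi, di, b, years, steps_per_year=365):
    """Cumulative gas in Bcf, integrating the rate with the trapezoid rule."""
    dt_years = 1.0 / steps_per_year
    days_per_step = 365.0 * dt_years
    total_mmcf = 0.0
    for i in range(int(round(years * steps_per_year))):
        q0 = arps_rate(qi, di, b, i * dt_years)
        q1 = arps_rate(qi, di, b, (i + 1) * dt_years)
        total_mmcf += 0.5 * (q0 + q1) * days_per_step  # MMcf/d x days = MMcf
    return total_mmcf / 1000.0  # 1 Bcf = 1,000 MMcf


# Hypothetical well: 10 MMcf/d IP, steep initial decline, b = 0.5
print(arps_rate(10.0, 2.0, 0.5, 1.0))               # 2.5 MMcf/d after one year
print(round(cumulative_bcf(10.0, 2.0, 0.5, 1.0), 3))  # 1.825 Bcf in year one
```

With these made-up inputs the rate falls 75% in the first year and by a smaller percentage in each later year. How quickly a well reaches that flatter stretch, and at what rate, largely determines its ultimate recovery.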

Based on the well production data, both analysts conclude that the core of the Haynesville Shale will be smaller than that of the Barnett Shale and will have worse well economics. They question whether wells located outside of the core area will be economic at today’s natural gas prices. They also question whether wells will produce the volume of gas initially predicted. If they do not, the Haynesville Shale will not be as large a gas field as early entrant explorers suggested.

A conclusion that comes from examining the well locations and their IPs is that there appear to be faults in the basin defining the core and non-core areas. Wells in the core show better production data than non-core wells. This structural definition within the basin suggests that the development of the Haynesville Shale will not be a “manufacturing process” in which the key to growing the basin’s production is merely drilling more wells and using the optimal number of fracture stages.

Exhibit 2. Basin Control Negates Manufacturing Concept

Source: A. Berman presentation

Mr. Dell’s analysis of the basin’s wells concludes that there are three distinct areas within the core area, along with a large non-core area. According to the data, wells drilled in the core area in 2009 had an average IP rate of 9.7 million cubic feet per day (MMcf/d), compared with an average of 6.9 MMcf/d for wells drilled outside the core area. The western core area showed an average well IP of 7.5 MMcf/d, the lowest rate within the three core areas. Since all of these wells were completed with 11 fracture stages, the results suggest that well IP performance is related to geology rather than to less complicated well completions.

Mr. Berman’s analysis concludes that the average estimated ultimate recovery (EUR) of wells in the Haynesville Shale is 2.0 billion cubic feet (Bcf). Based on his analysis of lease, drilling and completion, and operating costs, he estimates that a well needs an EUR of at least 5.0 Bcf to break even. Of the wells he analyzed, only 11% meet or exceed this commercial breakeven threshold.

Exhibit 3. Analysts Hold Optimistic View Of Shale Economics

Source: D. Kistler, Simmons & Company, International

While Messrs. Berman and Dell are critical of Haynesville Shale economics, many Wall Street analysts and industry participants still believe this basin, and all other gas shale basins, will provide long-term profits for E&P companies. One Wall Street firm has estimated the economics for all the major gas shale basins, including the threshold price needed for profitability. In the case of the Haynesville Shale, the firm estimates the threshold price at $4.40 per thousand cubic feet (Mcf). With natural gas prices currently around $5.45/Mcf, that implies roughly a dollar of profit per Mcf. A critical ingredient in this analysis, however, is the estimate of finding and development (F&D) costs. This estimate includes all the direct costs, such as leasing expense, drilling and completion costs and production maintenance costs, plus a share of corporate overhead. In the future, however, there will likely be significant additional costs for the new fracture treatments that will be needed on an ongoing basis to sustain gas shale well production. The F&D estimate also depends on an assumption about the volume of discovered gas reserves that will ultimately be produced. The greater the estimated gas volume, the lower the F&D cost per unit, and the easier it is to project a profitable well.
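To see why the reserve assumption matters so much, the sketch below spreads a hypothetical all-in well cost over the two EUR figures discussed above (Mr. Berman's 2.0 Bcf average and 5.0 Bcf breakeven). The $10 million cost is an assumption for illustration only, and the calculation ignores royalties, taxes, operating costs and the time value of money.

```python
def fd_cost_per_mcf(total_cost_usd, eur_bcf):
    """All-in finding and development cost per Mcf of recovered gas."""
    recovered_mcf = eur_bcf * 1_000_000  # 1 Bcf = 1,000,000 Mcf
    return total_cost_usd / recovered_mcf


HYPOTHETICAL_WELL_COST = 10_000_000  # illustrative only, not from the source

for eur in (2.0, 5.0):
    print(eur, "Bcf ->", fd_cost_per_mcf(HYPOTHETICAL_WELL_COST, eur), "$/Mcf")

# Margin implied by the Wall Street threshold price versus the current price
margin = 5.45 - 4.40
print(round(margin, 2))  # 1.05
```

The same well cost works out to $5.00/Mcf at 2.0 Bcf but $2.00/Mcf at 5.0 Bcf, which is why the EUR estimate drives the profitability picture.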

Exhibit 4. Potential Gas Committee Reserve Estimates

Source: A. Berman presentation; Potential Gas Committee

What is the significance for the domestic natural gas industry if the Haynesville Shale turns out to be smaller or less economic than initially anticipated? Early speculative estimates claimed the Haynesville Shale might contain 250 trillion cubic feet (Tcf) of natural gas. Last year, when the Potential Gas Committee reported that the nation had 1,836 Tcf of technically recoverable gas resources, it also estimated that 616 Tcf of this total was contained in gas shale formations. Based on the early estimates, the Haynesville Shale would account for roughly 40% of the gas shale total. If the Haynesville Shale does not contain as much gas, does that call into question the Potential Gas Committee’s total resource estimate? Would that shortfall potentially undercut the universal belief that natural gas, especially given the contribution from the gas shales, will be the bridge fuel from an economy relying on dirty hydrocarbon fuels to one powered by clean fuels? It may be too early to draw definitive conclusions, but one at least needs to ask the question: What if?
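The 40% figure is a simple ratio of the numbers quoted above; a quick check, keeping in mind that the 250 Tcf Haynesville number is an early, speculative estimate:

```python
# Resource figures quoted above, in trillion cubic feet (Tcf)
TOTAL_RESOURCE_TCF = 1836    # Potential Gas Committee, all U.S. gas
SHALE_RESOURCE_TCF = 616     # portion in gas shale formations
HAYNESVILLE_EARLY_TCF = 250  # early speculative Haynesville estimate


def share(part, whole):
    """Fraction of `whole` represented by `part`."""
    return part / whole


print(f"Shale share of total: {share(SHALE_RESOURCE_TCF, TOTAL_RESOURCE_TCF):.1%}")         # 33.6%
print(f"Haynesville share of shale: {share(HAYNESVILLE_EARLY_TCF, SHALE_RESOURCE_TCF):.1%}")  # 40.6%
```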

The Challenges And Costs Of Wind Energy

As the United States pushes forward to develop more wind farms both on- and offshore, the question of how they may change the economics of power and local tax revenues is growing in importance. One interesting development is a proposal being considered in Wyoming to institute a tax on wind energy producers. In a state whose government is heavily dependent on taxes on nonrenewable energy sources, the prospect of wind power projects displacing that income source creates a potential long-term problem. The proposal would add a $3 per megawatt-hour fee to the price of power, which would equate to about a 5% severance tax on minerals. The revenue raised would be split 60-40 between the state and local governments.
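To put the proposed Wyoming fee in concrete terms, here is a small calculation for a hypothetical wind farm. The 100 MW size and 30% capacity factor are assumptions chosen for illustration; only the $3/MWh fee and the 60-40 split come from the proposal described above.

```python
FEE_PER_MWH = 3.00               # proposed Wyoming wind fee, $/MWh
STATE_SHARE, LOCAL_SHARE = 0.60, 0.40
HOURS_PER_YEAR = 8760


def annual_fee_revenue(capacity_mw, capacity_factor):
    """Annual fee revenue in dollars: (total, state portion, local portion)."""
    mwh = capacity_mw * HOURS_PER_YEAR * capacity_factor
    total = mwh * FEE_PER_MWH
    return total, total * STATE_SHARE, total * LOCAL_SHARE


print(FEE_PER_MWH / 10, "cents per kWh")  # $/MWh -> cents/kWh

# Hypothetical 100 MW wind farm running at a 30% capacity factor
total, state, local = annual_fee_revenue(100, 0.30)
print(f"total ${total:,.0f}; state ${state:,.0f}; local ${local:,.0f}")
```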

At the moment, Wyoming wind farms pay property taxes, and when an exemption ends in 2012, new wind developers would be required to pay a 6% sales tax on equipment purchased for projects. Last fall the Wyoming legislature’s Joint Revenue Committee voted down two proposed bills that would have implemented a state tax on electricity generation while providing exemptions and credits so that other power generators, such as coal-fired power plants, would ultimately break even. The bills were rejected because they were considered too complex and because legislators were concerned about levying taxes on the fledgling wind power industry. What is intriguing is seeing a government look far down the road to the day when renewable energy limits its ability to raise revenue from taxing extraction industries, which account for 20% of the government’s income. So will wind power be free in the future, as many proponents of more wind farms postulate?

In Rhode Island, the debate has shifted to how much is too much to charge for electricity coming from an offshore wind farm. A contract was recently negotiated between the offshore wind farm developer, Deepwater Wind, and the state’s utility, National Grid (NGG-NYSE). Under the terms of the contract, each kilowatt-hour (kWh) of surplus power sold to National Grid will be priced at 24.4¢/kWh when the project begins supplying power in 2013. That price is set to escalate 3.5% per year over the 20-year contract. As part of its contract approval process, the Rhode Island Public Utilities Commission (PUC) has held four public hearings and has accepted written testimony from experts and other interested parties. At a March 9th hearing, the panel will hear the witnesses’ statements and question them before rendering a final decision by March 30.

The PUC, which represents the public interest, retained an expert witness to opine on the contract price. Not surprisingly, its expert concluded the contract price is too high. The expert was asked to compare the Rhode Island power price with prices being charged for offshore wind power in Europe and with any domestic offshore wind power contracts that have been negotiated. He concluded the 24.4¢/kWh price was considerably higher than all the European offshore wind contract prices and nearly twice the rate negotiated by Bluewater Wind and Delmarva Power in 2008 for power from offshore Delaware. That contract’s terms were a 13.9¢/kWh charge with an annual escalation of 2.5%.
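Because the two contracts also escalate at different rates, the gap widens over time. A minimal sketch, assuming the base price applies in contract year 1 and each escalation takes effect at the start of the following year; the Delaware contract's term is not stated here, so running both over 20 years is an assumption made for comparison only.

```python
def price_path(base_cents, escalation, years):
    """Contract price (cents/kWh) in each contract year; year 1 is the base price."""
    return [base_cents * (1 + escalation) ** y for y in range(years)]


YEARS = 20  # Rhode Island term; applying it to Delaware is an assumption
ri = price_path(24.4, 0.035, YEARS)  # Deepwater Wind / National Grid
de = price_path(13.9, 0.025, YEARS)  # Bluewater Wind / Delmarva Power

print(f"Year 1:  RI {ri[0]:.1f}  DE {de[0]:.1f}  ratio {ri[0] / de[0]:.2f}")
print(f"Year {YEARS}: RI {ri[-1]:.1f}  DE {de[-1]:.1f}  ratio {ri[-1] / de[-1]:.2f}")
```

Under these assumptions the Rhode Island price approaches 47¢/kWh by year 20, and the ratio between the two contracts grows from about 1.76 to above 2.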

Exhibit 5. Wind Electricity Prices

Source: ProJo.com

The PUC expert said his analysis concluded the National Grid contract would provide more than a 20% return to Deepwater Wind. He said that if the price were reduced to 17¢/kWh the developer would earn a utility industry-standard 15% return. An 18% return would yield a price of 21.4¢/kWh. In his view, Deepwater Wind should have to justify why it should not accept a lower rate of return.
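The expert's point is that the contract price maps directly to the developer's rate of return. We do not have his model, so the sketch below only illustrates the mechanism with an internal rate of return (IRR) calculation on an entirely hypothetical project ($100 million capital cost, 100,000 MWh a year, $3 million a year of operating cost, 3.5% annual price escalation). It deliberately ignores federal tax credits, depreciation and financing structure, which are exactly the items Deepwater Wind says the expert mishandled.

```python
def npv(rate, cash_flows):
    """Net present value; cash_flows[0] occurs today, then one per year."""
    return sum(cf / (1 + rate) ** t for t, cf in enumerate(cash_flows))


def irr(cash_flows, lo=-0.99, hi=1.0, tol=1e-10, max_iter=200):
    """IRR by bisection; assumes NPV changes sign exactly once on [lo, hi]."""
    f_lo = npv(lo, cash_flows)
    if f_lo * npv(hi, cash_flows) > 0:
        raise ValueError("IRR is not bracketed by [lo, hi]")
    for _ in range(max_iter):
        mid = (lo + hi) / 2
        f_mid = npv(mid, cash_flows)
        if f_lo * f_mid <= 0:
            hi = mid              # root lies in [lo, mid]
        else:
            lo, f_lo = mid, f_mid  # root lies in [mid, hi]
        if hi - lo < tol:
            break
    return (lo + hi) / 2


def project_flows(price_cents, capex, annual_mwh, opex, escalation=0.035, years=20):
    """Hypothetical project: upfront capex, then escalating revenue less flat opex."""
    flows = [-capex]
    for y in range(years):
        revenue = annual_mwh * 1000 * (price_cents / 100) * (1 + escalation) ** y
        flows.append(revenue - opex)
    return flows


# Made-up inputs: $100 million capex, 100,000 MWh/yr, $3 million/yr opex
for price in (17.0, 21.4, 24.4):
    print(price, round(irr(project_flows(price, 100e6, 100_000, 3e6)), 3))
```

The absolute returns printed here mean nothing for Deepwater Wind; the useful takeaway is only that, holding costs fixed, each cent per kWh in the contract price moves the return materially.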

As expected, the head of Deepwater Wind said the expert had failed to correctly factor federal tax credits into his company’s financing structure. He believes that if the calculations were done properly, the rate of return his company will earn would fall into the 15% to 18% range. The Deepwater Wind CEO also said the Delaware contract reflected power from a much larger offshore wind farm (70 turbines versus eight in the Rhode Island development) that benefits from economies of scale, which translate into a lower per-unit charge.

An energy consultant retained by National Grid submitted testimony describing the data on offshore wind pricing as “sparse” because there are no existing offshore wind farms in the United States. Other than the Bluewater Wind project, the Cape Wind project in Massachusetts is the only other offshore project under development, and it does not yet have a contract. He did look at these projects, as well as the price for power from an offshore wind farm in the Great Lakes. Electricity from that project is estimated to cost 18.6¢/kWh, but that development will be installed in shallow freshwater, not in deep saltwater in the Atlantic Ocean.

While the battle over the appropriate power price continues in Rhode Island, Cape Wind released a study it commissioned from consultant Charles River Associates on the impact of its wind farm on New England power prices. The report, “Analysis of the Impact of Cape Wind on New England Energy Prices,” concluded that adding this offshore development’s electricity would reduce the wholesale cost of power by an average of $185 million a year over 2013-2037. The aggregate savings would total $4.6 billion over the 25 years. The study also concluded that the price of power in New England’s wholesale market would be $1.22 per megawatt-hour lower on average over the period.
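The headline figures can be cross-checked with a few lines of arithmetic. The last step, dividing annual savings by the average price reduction, is only meaningful if both averages were computed on the same basis, which the study summary does not say; treat it as a rough consistency check, not a result from the report.

```python
FIRST_YEAR, LAST_YEAR = 2013, 2037
AVG_ANNUAL_SAVINGS = 185e6    # dollars per year, from the study summary
AVG_PRICE_REDUCTION = 1.22    # dollars per MWh, from the study summary

years = LAST_YEAR - FIRST_YEAR + 1  # inclusive count of years
total_savings = AVG_ANNUAL_SAVINGS * years
print(years, f"${total_savings / 1e9:.1f} billion")  # 25 $4.6 billion

# ASSUMPTION: both averages share a basis, so savings / price cut ~ MWh affected
implied_mwh = AVG_ANNUAL_SAVINGS / AVG_PRICE_REDUCTION
print(f"{implied_mwh / 1e6:.0f} million MWh per year")  # 152 million MWh per year
```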

The price of power in the New England electricity market is determined hourly and is set by the highest-cost power plant needed to meet demand in that hour. Charles River Associates concludes that the introduction of cheaper Cape Wind power will displace higher-cost power during certain hours of the day and thereby reduce the average wholesale power price, saving New England electricity customers money. In reading through the report, we wondered how they know in which hours of the day the wind power will be displacing higher-cost power. Second, we wondered how they account for the cost of the standby power we have written about, which involves keeping another power plant running at low utilization, inflating its kilowatt-hour price. Finally, we discovered that part of the savings depends on the future prices of natural gas and carbon offsets. Unfortunately, it is impossible to determine how much these variables drive the conclusion.
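The pricing mechanism described above can be illustrated with the textbook uniform-price "merit order" model: plants are dispatched cheapest first and the last unit needed sets the price for everyone. This is a simplification of ISO New England's actual market rules, and the plant list, capacities and costs below are hypothetical, not taken from the Charles River study. It shows why the savings depend on which hours the wind blows.

```python
from dataclasses import dataclass


@dataclass
class Plant:
    name: str
    capacity_mw: float
    marginal_cost: float  # $/MWh


def clearing_price(plants, demand_mw):
    """Dispatch plants cheapest-first; the last unit needed sets the price."""
    supplied = 0.0
    for plant in sorted(plants, key=lambda p: p.marginal_cost):
        supplied += plant.capacity_mw
        if supplied >= demand_mw:
            return plant.marginal_cost
    raise ValueError("demand exceeds available capacity")


# Hypothetical fleet; none of these numbers come from the Charles River study
fleet = [
    Plant("nuclear", 1000, 10.0),
    Plant("coal", 800, 30.0),
    Plant("gas combined cycle", 1200, 50.0),
    Plant("oil/gas peaker", 500, 90.0),
]
# Assume 200 MW of wind happens to be available in the hour being priced
with_wind = fleet + [Plant("offshore wind", 200, 0.0)]

for demand in (2900, 3100):
    print(demand, clearing_price(fleet, demand), clearing_price(with_wind, demand))
```

At 2,900 MW the wind leaves the price unchanged at $50/MWh; at 3,100 MW it pushes the peaker out of the stack and drops the price from $90 to $50. Whether wind is available in the high-demand hours is what determines the savings.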

Exhibit 6. Natural Gas Prices Are Forecast To Climb

Source: Charles River Associates

Exhibit 7. Carbon Prices Are Projected To Escalate

Source: Charles River Associates

The gas and carbon prices are all in nominal dollars that have been inflated at 2.01% per year, which is the rate of inflation used by the Energy Information Administration (EIA) in its Annual Energy Outlook forecasts. Another consideration has to be the savings coming from consumption shifts by non-wind power generation. It is interesting that hydro power, another low-cost renewable fuel, has a positive, albeit small, impact on the market. The early years see a reduction in coal-fired and natural gas/oil power, but in the later years these two sources move in opposite directions.
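Because the study's gas and carbon prices are in nominal dollars, part of any upward trend is simply inflation. A minimal sketch of the conversion at the 2.01% rate cited above, assuming a 2010 base year and a hypothetical flat $6.00/MMBtu real gas price:

```python
EIA_INFLATION = 0.0201  # annual rate cited in the text


def to_nominal(real_value, base_year, year, inflation=EIA_INFLATION):
    """Convert a value in base_year dollars into that year's nominal dollars."""
    return real_value * (1 + inflation) ** (year - base_year)


def to_real(nominal_value, base_year, year, inflation=EIA_INFLATION):
    """Inverse of to_nominal: strip inflation back out."""
    return nominal_value / (1 + inflation) ** (year - base_year)


# Hypothetical: a gas price that is flat at $6.00/MMBtu in 2010 dollars
for yr in (2013, 2025, 2037):
    print(yr, round(to_nominal(6.00, 2010, yr), 2))
```

Under these assumptions, a price that never changes in real terms still appears about 71% higher in nominal dollars by 2037.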

The results of the study show a cyclical pattern in the wholesale price reduction of electricity and in the cost of power in the New England region. We assume the forecasting model used has a business cycle built in, as it is hard to conceive there would be so much variation in the price and total cost over time given the steady upward trend in alternative fuel costs.

Exhibit 8. Costs Benefit From Less Dirty Fuel Consumption

Source: Charles River Associates

Exhibit 9. Substantial Price Savings Come In Later Years

Source: Charles River Associates

Exhibit 10. Steady Upward Trend In Total Regional Savings

Source: Charles River Associates

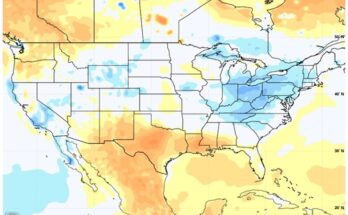

Another interesting study we found was produced by 3TIER, an alternative energy information consulting firm. The study produced a series of maps showing how average wind speeds in the United States differed in 2009 from their long-term averages, illustrating the impact of El Niño on wind power generation in several important regions. This is an important consideration since the government is banking on increased wind power to meet its goals of increasing renewable energy sources and reducing carbon emissions. As we know, the U.S. has a number of areas blessed with strong wind patterns throughout the year. The wind resource map produced by the EIA shows that the central portion of the country is similar to a wind tunnel and is where large wind farms should be located. The offshore regions also represent attractive potential locations for installing wind farms.

Exhibit 11. Central U.S. and Coasts Are Prime Wind Areas

Source: EIA

As 3TIER’s maps of the wind difference for all of 2009 and for the fourth quarter show, last year was a subpar year for wind power. Since the lack of wind is attributed to El Niño, we need to treat future El Niño events as a concern when predicting the amount of wind power we can count on in any particular year. We know the development of El Niños can be predicted. If the nation places a huge bet on wind power in regions of the country susceptible to reduced wind during El Niño years, then we need to factor the weather phenomenon’s impact into the amount of power that can be counted on.

When one examines these two maps, it is clear the blue areas, reflecting 5% to 10% declines from normal wind patterns, were concentrated in the Great Plains states that are the target for wind farm development. The fourth quarter 2009 map showed this Great Plains low wind pattern more clearly, but it also highlighted low wind in Texas, the leading state for installed wind turbine capacity.

Exhibit 12. El Niño Has Reduced Expected Wind

Source: 3TIER

Exhibit 13. Q4 Showed Significant Wind Power Loss

Source: 3TIER

With these maps, the wind industry may be able to site new wind farms in the best locations to ensure minimum exposure to El Niño-altered wind patterns, something we know will recur numerous times during the life of a wind farm. While understanding wind variability due to natural weather phenomena is important, we also should address whether wind is the most cost-effective way to power the United States in the future. The challenge for wind is that its variability and intermittent nature make it cost more than people think. That was highlighted last week by Walmart Canada when it announced two renewable energy test projects for stores in the province of Ontario. Walmart Canada plans to install a 20-kW wind turbine adjacent to a store. The turbine is expected to produce 50,000 kWh per year, or an average of 137 kWh per day. Since the turbine’s rated output is 480 kWh/day, the anticipated output equates to a capacity factor (often loosely called an efficiency rating) of about 28.5%. Walmart Canada did not disclose how much it plans to spend on the project, but it anticipates powering the store and selling excess power under Ontario’s feed-in tariff program.
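The Walmart Canada arithmetic is a capacity-factor calculation: expected output divided by what the turbine would produce running at full rated power around the clock. A minimal sketch, treating the turbine's rating as 20 kW of power, which is what the 480 kWh/day figure implies:

```python
HOURS_PER_YEAR = 8760


def capacity_factor(annual_kwh, rated_kw):
    """Actual output divided by output at full rated power all year."""
    return annual_kwh / (rated_kw * HOURS_PER_YEAR)


rated_kw = 20        # 20 kW of rated power (480 kWh/day at full output)
annual_kwh = 50_000  # Walmart Canada's expected annual output

print(round(annual_kwh / 365))                          # 137 kWh per day
print(rated_kw * 24)                                    # 480 kWh per day at rated power
print(f"{capacity_factor(annual_kwh, rated_kw):.1%}")   # 28.5%
```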

What is interesting is the popular perception that wind power is free. That view was reinforced by Cape Wind President Jim Gordon in commenting on the Charles River Associates study of wind’s impact on New England’s power prices. He said, “No one knows how high the price of natural gas and oil will go in the next 25 years but we do know that the price of wind will remain zero.” Gee, if the price of wind is zero, why does Cape Wind need to negotiate a price of 16¢-18¢/kWh for its output?

An interesting study was prepared by the Science & Public Policy Institute titled “The True Cost of Electricity from Wind is Always Underestimated and its Value is Always Overestimated.” While the report does not develop specific numbers on the cost per kWh for wind versus other electricity sources, it examines the factors that influence the true cost and value of wind power. Cost and value are two very different issues. The true cost of wind power is influenced by the federal, state and local government tax breaks and subsidies provided to wind farm developers. Additionally, the cost per kWh is influenced by assumptions about the performance of wind turbines, which are largely guesses based on the analysis of wind patterns, electricity demand and alternative power availability. Guesses also have to be made about the total operating, maintenance and replacement costs during the assumed life of turbines; how long they will last; how much electricity they will generate; and what their decommissioning cost will be.

One classic example of the difficulty in determining the true cost of wind power is its efficiency rating, or capacity factor. Wind farms are characterized by their nameplate capacity, but over time we have learned they produce, on average, only between 25% and 35% of that capacity. Additionally, because wind power is intermittent, it must be backed up with alternate power sources that can meet the utility’s needs if the wind stops blowing or is blowing too hard.

Turbines begin generating electricity at wind speeds of about 6 miles per hour (mph). They reach rated capacity at around 32 mph, cut out at around 56 mph, and do not restart generating electricity until the wind drops to about 45 mph. Much of the time, wind turbines are generating either no power or only small amounts well below their rated capacity.
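The operating thresholds above behave like a small state machine: the turbine shuts down in high winds and stays off until the wind eases to the restart speed. A toy sketch using the mph thresholds from the text; the cubic ramp between cut-in and rated speed is an assumption (real turbines follow a manufacturer's measured power curve), and the `Turbine` class is purely illustrative.

```python
CUT_IN_MPH = 6.0    # from the text
RATED_MPH = 32.0    # from the text
CUT_OUT_MPH = 56.0  # from the text
RESTART_MPH = 45.0  # from the text


class Turbine:
    """Toy wind turbine with a high-wind cut-out and restart hysteresis."""

    def __init__(self, rated_kw):
        self.rated_kw = rated_kw
        self.stopped_for_high_wind = False

    def output_kw(self, wind_mph):
        if self.stopped_for_high_wind:
            if wind_mph > RESTART_MPH:
                return 0.0                      # still waiting for winds to ease
            self.stopped_for_high_wind = False  # winds have dropped: restart
        if wind_mph >= CUT_OUT_MPH:
            self.stopped_for_high_wind = True   # protective shutdown
            return 0.0
        if wind_mph < CUT_IN_MPH:
            return 0.0
        if wind_mph >= RATED_MPH:
            return self.rated_kw
        # ASSUMPTION: output grows with the cube of wind speed between
        # cut-in and rated speed; real turbines follow a measured power curve.
        frac = (wind_mph ** 3 - CUT_IN_MPH ** 3) / (RATED_MPH ** 3 - CUT_IN_MPH ** 3)
        return self.rated_kw * frac


t = Turbine(rated_kw=20)
for w in (4, 15, 35, 58, 50, 44):
    print(w, round(t.output_kw(w), 1))
```

In this sequence the 50 mph reading produces nothing, even though a running turbine would be at full output in that wind, because the turbine is still waiting for the wind to fall to 45 mph after its high-wind shutdown.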

The variability of the electricity produced by wind plays into the value proposition. The critically important factor affecting the true value of the capacity of any generating unit is how much of the unit’s nameplate capacity can definitely be counted on to be available to generate electricity and how much of the capacity can be counted on to produce at the time of peak electricity demand in the targeted market. In determining availability, the wind turbine must not only be operable but it must have wind. The challenge for peak electricity demand is that it usually occurs on hot, weekday late afternoons in July or August when there is generally little or no wind, therefore no electricity from wind turbines. It is this lack of reliability that devalues wind power compared to electricity generated by other fuel sources. The other plants are capable of coming on stream and ramping up their output on command while wind power cannot provide that assurance. Electric utilities relying on wind power claim that if they have sufficient wind turbines spread over a wide geographic area they should be able to manage the challenge of bringing wind power on stream and ramping it up on command.

The report outlines the tax breaks and subsidies for wind farm developers, which we will not detail. The report’s author pointed to an April 2008 EIA report indicating that federal tax breaks and subsidies during 2007 averaged $23.37 per megawatt-hour (about 2.3¢ per kWh) of electricity produced by wind. The author concludes this figure is too low because it did not include state and local tax breaks and subsidies for wind farm developers, or the 5-year double declining balance accelerated depreciation available to wind farms but not to other, reliable generating units. He would also include the $1 billion of additional tax breaks and subsidies for wind power awarded in 2009 by the U.S. Departments of Energy and Treasury under various economic stimulus bills.

A concluding point in the report was the difficulty of understanding the true cost of wind power given misleading information put out by government agencies. It specifically referred to a Department of Energy National Renewable Energy Laboratory (DOE-NREL) graph showing an 80% decline in the cost of electricity from wind. This graph has since been withdrawn, but politicians and the media continue to refer to it. The Department of Energy’s Office of Energy Efficiency and Renewable Energy (DOE-EERE) has recently acknowledged that its data show the cost per kWh of electricity from wind is rising, not falling. With government mandates for increased use of renewable power sources, and in particular wind, is the U.S. destined to become a high-cost energy country as we turn the power-cost pyramid upside down?

New Transport Index A Leading Indicator Of Economy

A new index of economic activity has been unveiled that shows a strong correlation to the Federal Reserve’s Industrial Production Index, yet is issued days ahead of that index. The new measure of economic activity is the result of an econometric analysis and is produced by Ceridian Corporation in conjunction with UCLA’s Anderson School of Management and the consulting firm, Charles River Associates. The latest reading from this new index suggests the nation’s economy fell in January after a significant rise in December, with the index falling at an annualized rate of 36.8%. The more reliable three-month moving average of the index was up 3.3% in January versus the 14.6% increase in the previous month.
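Annualized monthly changes and three-month moving averages are easy to misread. Ceridian's exact conventions are not described here, so the sketch below assumes compounded annualization and a simple trailing average; the index levels in the last line are hypothetical.

```python
def annualize(monthly_change):
    """Compound a one-month fractional change to an annual rate."""
    return (1 + monthly_change) ** 12 - 1


def deannualize(annual_rate):
    """Monthly change implied by a compounded annualized rate."""
    return (1 + annual_rate) ** (1 / 12) - 1


def moving_average(values, window=3):
    """Trailing moving average; the first window-1 points have no value."""
    return [sum(values[i - window + 1:i + 1]) / window
            for i in range(window - 1, len(values))]


monthly = deannualize(-0.368)
print(f"{monthly:.2%}")  # -3.75% for the month itself
print(moving_average([100.0, 104.0, 101.0, 97.0]))  # hypothetical index levels
```

Under the compounding assumption, a 36.8% annualized drop corresponds to a one-month decline of under 4%, which is why the smoother three-month average is the more useful reading.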

The index, the Ceridian-UCLA Pulse of Commerce Index™, is based on an analysis of real-time diesel fuel consumption data from over-the-road trucking operations tracked by Ceridian Corporation, the credit card processing firm. The index is constructed from Ceridian’s electronic card payment data, collected at over 7,000 fuel outlets along the major interstate highways, which capture the location and volume of diesel fuel purchased by over-the-road trucking operations. It provides a real-time measure of the movement of products across America. The data are also analyzed regionally for the nine U.S. Census regions.

The January 2010 index stands 3.6% above January 2009, and its recent performance is similar to the year-over-year changes experienced in pre-recession periods. That is encouraging, but even more so is the fact that the 3-month moving average is 2.3% above a year ago, the first year-over-year increase since April 2008, 21 months ago. Despite all this good news, the caution is that the January index suggests activity was slower than implied by the December index, which may mean economic growth is slowing. The greatest advantage of this index is its ability to forecast the trend of the Federal Reserve’s Industrial Production Index, which highlights the strength, or weakness, of the U.S. manufacturing sector, a precursor of overall economic activity.

In many of our articles outlining impressions from our annual drives between Houston and our vacation home in Rhode Island, we have discussed the number of trucks observed on the highways or at truck stops. We have used our visual impressions of over-the-road truck traffic during these trips as a reflection of the health of the economy. As Craig Manson, senior vice president and index expert for Ceridian put it, “Goods have to be transported for an economy to grow, so it will be important to monitor this index to see if the economy is really on the move.” Maybe our eyes will be replaced by this new index.

Exhibit 14. Economy Worried By Pulse Of Commerce Index™

Source: Ceridian Corp.; PPHB

Cool Pacific Ocean Says Greater 2010 Hurricane Season

The Australian Bureau of Meteorology reported last week that while Central Pacific Ocean temperatures remain well above El Niño thresholds, and significant areas east of the international dateline continue to exceed their historical average temperature by more than 2° Centigrade, the central to eastern Pacific has continued to cool since El Niño warmth peaked in late December and early January. The sub-surface of the equatorial Pacific Ocean has also cooled over the last month, which historically indicates that a return to neutral conditions may be under way. The significance of this trend is its potential to affect Atlantic basin weather conditions during this year’s hurricane season. The possibility that El Niño may be fading suggests those of us living along the Gulf Coast may need to prepare for a more active tropical storm year, with more storms, and more intense ones, than we experienced last year. That could be bad news for energy companies operating in the Gulf of Mexico, and it could be bad news for consumers.

The Australian Bureau’s view of changing weather dynamics is shared by the U.S. government’s Climate Prediction Center, which points to the cooling as a transition to neutral conditions for the upcoming Northern Hemisphere’s spring. Observations from various private weather forecasting services also support this view of a gradual ending of El Niño.

The El Niño event we have been experiencing is the strongest to have developed since the 1996-1997 event. According to commodities traders, the ending of El Niño, besides creating more havoc in the oil and gas producing region of the U.S. Gulf, may restore the normal pattern of rain that could improve crop yields and in turn lower agricultural commodity prices.

Last year’s hurricane season in the Atlantic Basin was one of the quietest since 1997. There were a total of 10 named tropical storms with four reaching hurricane strength and two of those becoming major hurricanes. Last year’s reduced activity was in line with the low level of hurricane activity experienced in 2006. The ending of El Niño, an event that has been speculated on for some time, is contributing to expectations for higher storm activity this year from the hurricane forecasting team at Colorado State University (CSU).

In CSU’s first forecast for the 2010 hurricane season, made last December, the team said it expects an “above-average” Atlantic basin tropical cyclone season. It also called for an above-average probability of a major hurricane making landfall in the U.S. and Caribbean. In preparing its forecast for hurricane activity some 6-11 months in the future, the CSU team stated that the Atlantic basin has the largest year-to-year variability of any of the global tropical cyclone basins. As such, they said it was impossible to be precise in predicting hurricane activity at such an extended range.

Even though they recognize the forecasting difficulty, the CSU team suggests that, over 58 years of history, their early December statistical forecast methodology shows evidence of improved forecasting ability compared with climatology. They acknowledged, however, that because their December forecasts have yet to show real-time forecast skill, the team has limited its forecasting to providing a range of estimates along with an assessment of current meteorological conditions and their impact on the year’s Atlantic basin tropical cyclone season. Despite the shortcomings of the forecast, we sense the CSU team feels pressured to issue one. Our conclusion is based on their statement that, “We issue these forecasts to satisfy the curiosity of the general public and to bring attention to the hurricane problem.”

Exhibit 15. 2010 Hurricane Season To Be Much More Active

Source: Colorado State University, PPHB

The CSU forecast for 2010 calls for a more active storm year than last year, more in line with the experience of 2003 or 2008. The higher landfall probabilities are also noteworthy. The CSU team puts the probability of a major hurricane (Category 3-4-5) striking the entire U.S. coastline at 64%, compared with the average for the last century of 52%. For the U.S. East Coast, including the Florida peninsula, the probability is 40% versus the historical average of 31%. For the Gulf Coast between the Florida panhandle and Brownsville, Texas, the probability is 40% versus the historical average of 30%. The probability of a major hurricane striking the Caribbean rises to 53% compared with the historical average of 42%.

It is instructive to examine the pattern of El Niño years and the years immediately following them. The CSU December report contained a table showing El Niño years during positive Atlantic Multidecadal Oscillation (AMO) periods (1950-1969, 1995-present), along with their JA-SO Multivariate ENSO Index (MEI) anomalies and observed Net Tropical Cyclone (NTC) activity. The ENSO Index takes into account tropical Pacific sea surface temperatures, sea level pressures, zonal and meridional winds, and cloudiness.

Exhibit 16. More Active Years Follow Less Active Seasons

Source: Colorado State University, PPHB

The significance of the analysis is that none of the years following those with low storm activity had a JA-SO MEI greater than 0.5 standard deviations. The pattern suggests 2010 should follow the example of those earlier years and produce more storm activity. To gauge how active this year might become, the CSU team examines analog years. As the table of analog years shows, the team anticipates a higher level of storm activity than in 2009, in line with the average of the analog years.

Exhibit 17. Analog Storm Years Suggest Active Season in 2010

Source: Colorado State University, PPHB

The ACE (Accumulated Cyclone Energy) number, which measures the potential for wind and storm surge damage, along with the NTC number, is also in line with the historical averages. We can only hope that 2010's storm season turns out to be more like 1952 than either 1958 or 1964. We will be interested in seeing CSU's next forecast when it is released in early April.

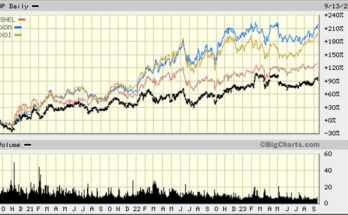

Commodity Investment Class Earns Positive Review

The 2006 academic paper that stimulated the investment boom in commodities was recently reviewed in the Financial Times. The paper, authored by two Yale finance experts, examined 45 years of price performance of commodities and equities. The paper, "Facts and Fantasies about Commodity Futures," concluded that commodities "work well when they are needed the most," meaning they performed well when equities did not. This diversification benefit drove institutional investors, and then individual investors, to put huge sums of money into commodity funds during the past few years. The historical performance record helped transform this niche investment sector into a standalone asset class. It was largely this investment wave that was responsible for the sharp rise in commodity prices in 2007 and the first part of 2008.

The problem was that when the stock market collapsed in the second half of 2008, commodity prices also fell. Part of the correlation reflected the lack of credit available to back these asset trades, coupled with investor fear that drove money away from risky investments and into safe investments, primarily U.S. Treasury bills. More troubling, the positive correlation continued in 2009. According to the Financial Times, last summer the correlation between the Standard & Poor's 500 Index of stocks and the S&P GSCI commodity index rose to nearly 0.8. Even so, investors were not dissuaded: according to Barclays Capital, they poured a record $68 billion into commodity funds last year.
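Correlation figures such as the 0.8 cited above are typically Pearson coefficients computed on periodic returns of the two indexes; we assume that convention here, as the exact FT methodology is not stated. A value near +1 means stocks and commodities moved together, near -1 means they moved in opposite directions, which is the hedge the Yale paper described. The minimal sketch below computes the coefficient; the helper name `pearson_correlation` and the monthly return figures are hypothetical and for illustration only, not actual S&P 500 or GSCI data.

```python
import math


def pearson_correlation(xs, ys):
    """Pearson correlation coefficient of two equal-length return series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length (at least 2 points)")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance numerator: how far each pair moves from its mean together.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    # Variance terms used to normalize the result into the range [-1, 1].
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Hypothetical monthly returns (illustrative only, not actual index data).
stocks = [0.02, -0.03, 0.01, 0.04, -0.02]
commodities = [0.03, -0.04, 0.00, 0.05, -0.01]
print(round(pearson_correlation(stocks, commodities), 2))
```

Because both hypothetical series rise and fall in the same months, the coefficient comes out strongly positive, the kind of reading that undercuts the diversification case.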

The Financial Times writer visited the professors and questioned them about the positive correlation between these two asset classes that materialized almost immediately after the publication of their research paper. They said the price volatility of the past two years had not changed their view that, over the long term, commodity futures returns will match equity returns but with a negative correlation. That would be a welcome change from the performance of stocks and commodities in 2008. That year, the S&P 500 lost 38% as the recession and credit crisis developed, and the GSCI commodity index fell even further, down 46%.

It will be interesting to see whether new research reports analyze the correlation between equities and commodities. One wonders what role inflation and currency trends play in the relationship. The decade of the 1970s is an interesting period to examine. During that decade, the nation experienced a quadrupling of crude oil prices and a rapid acceleration in inflation, yet the correlation between equities and commodities moved from hugely negative to hugely positive within the 10-year period. On the other hand, the early 1990s were marked by low crude oil and natural gas prices and a booming stock market, which helps explain the highly negative correlation between the two asset classes during that period. We think there is room for new research on this topic.

Exhibit 18. Are Commodities The Perfect Hedge?

Source: Financial Times

Contact PPHB:

1900 St. James Place, Suite 125

Houston, Texas 77056

Main Tel: (713) 621-8100

Main Fax: (713) 621-8166

www.pphb.com

Parks Paton Hoepfl & Brown is an independent investment banking firm providing financial advisory services, including merger and acquisition and capital raising assistance, exclusively to clients in the energy service industry.